Overview

About the solution

Starting with Release 12, Cisco rebranded Data Center Network Manager (DCNM) as Nexus Dashboard Fabric Controller (NDFC).

Cisco Nexus Dashboard Fabric Controller (NDFC) is a network management system (NMS) that supports traditional and multi-tenant LAN fabrics as well as SAN fabrics from a single window. Cisco NDFC can:

- Work across all Cisco Nexus and MDS switching families.

- Manage large numbers of devices while providing ready-to-use control, management, and automation capabilities including Virtual Extensible LAN (VXLAN) control and automation for Cisco Nexus LAN fabrics.

- Support automatic configuration for multi-tenant automation.

- Offer integrated storage visualization, provisioning, and troubleshooting.

- Offer intuitive, multifabric topology views for LAN fabric and storage.

- Integrate with Cisco UCS Director, vSphere, and OpenStack.

NDFC

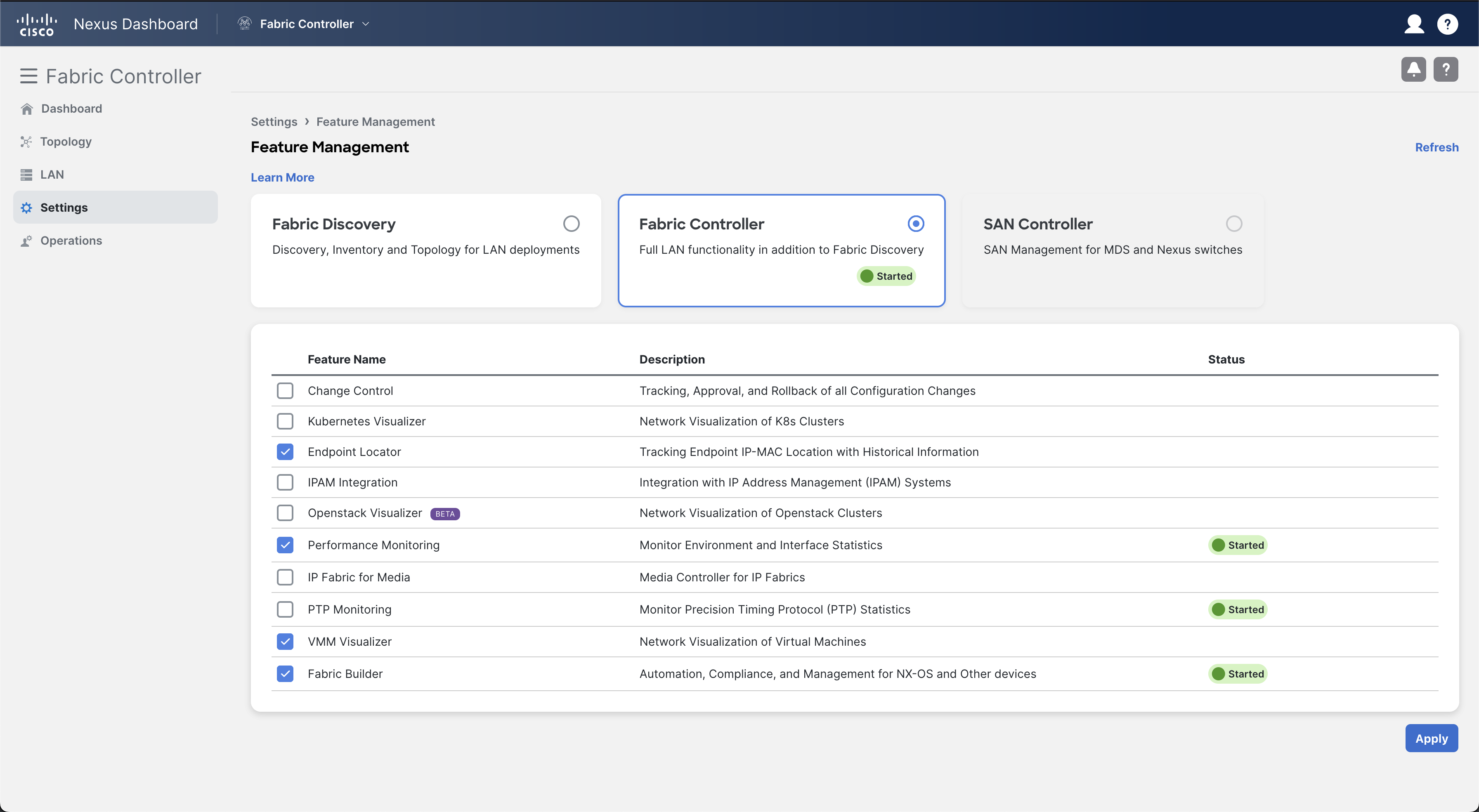

NDFC Feature Management lets you dynamically enable feature sets and scale applications. The first time that you start NDFC from the Cisco Nexus Dashboard, NDFC displays the Feature Management screen. If you do not configure the installer and features at this point, NDFC supports only backup and restore operations. To enable features later, select the Feature Management option in Settings as shown in the following image.

Info

This demo already has the required NDFC features enabled. You do not need to enable any of the features but can view the features in the Feature Management screen.

VXLAN EVPN

Virtual Extensible LAN (VXLAN) is an overlay technology for network virtualization. It provides a Layer 2 extension over a shared Layer 3 underlay network by using MAC-in-IP/UDP tunneling encapsulation: the original Ethernet (MAC) frame is carried inside an IP User Datagram Protocol (UDP) packet. The Layer 2 extension in the overlay removes the constraints of physical server racks and geographical boundaries, which gives you flexibility in workload placement within a data center or between data centers.
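
To make the encapsulation concrete, the following minimal Python sketch builds and parses the 8-byte VXLAN header that RFC 7348 places between the outer UDP header and the original Layer 2 frame. The function names are illustrative assumptions and are not part of NDFC or NX-OS; the outer UDP, IP, and Ethernet headers are left out.

```python
import struct

VXLAN_UDP_PORT = 4789          # IANA-assigned UDP destination port for VXLAN
VXLAN_FLAG_VNI_VALID = 0x08    # "I" flag: the VNI field carries a valid value


def build_vxlan_header(vni: int) -> bytes:
    """Return the 8-byte VXLAN header for a 24-bit VXLAN network identifier."""
    if not 0 <= vni < 2 ** 24:
        raise ValueError("VNI must fit in 24 bits")
    # Word 1: flags (8 bits) + reserved (24 bits).
    # Word 2: VNI (24 bits) + reserved (8 bits).
    return struct.pack("!II", VXLAN_FLAG_VNI_VALID << 24, vni << 8)


def parse_vni(header: bytes) -> int:
    """Extract the VNI from the first 8 bytes of a VXLAN payload."""
    flags_word, vni_word = struct.unpack("!II", header[:8])
    if not (flags_word >> 24) & VXLAN_FLAG_VNI_VALID:
        raise ValueError("VNI-valid flag is not set")
    return vni_word >> 8


def encapsulate(inner_frame: bytes, vni: int) -> bytes:
    """Prepend the VXLAN header to the original Ethernet frame.

    Illustrative helper only: a real VTEP also adds the outer UDP, IP,
    and Ethernet headers around this payload.
    """
    return build_vxlan_header(vni) + inner_frame


if __name__ == "__main__":
    payload = encapsulate(b"\x00" * 14, vni=30000)
    print(payload[:8].hex(), parse_vni(payload))
```

The 24-bit VNI is what lets VXLAN address roughly 16 million segments, compared with the 4094 usable IDs of a 12-bit VLAN tag.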

The initial IETF VXLAN standard (RFC 7348) defined a multicast-based flood-and-learn VXLAN without a control plane. It relies on data plane-driven flood-and-learn behavior for remote VXLAN tunnel endpoint (VTEP) peer discovery and remote end-host learning. Overlay broadcast, unknown unicast, and multicast traffic is encapsulated into multicast VXLAN packets and transported to remote VTEP switches through underlay multicast forwarding. Flooding in such a deployment can limit the scalability of the solution. The requirement to enable multicast in the underlay network is a further challenge because some organizations do not want to enable multicast in their data centers or WAN networks.
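
The flood-and-learn behavior can be sketched as a small Python model. This is a simplified illustration, not switch code: the class name, the addresses, and the single multicast group per segment are assumptions made for the example.

```python
class FloodAndLearnVtep:
    """Toy model of a VTEP that learns remote hosts from data-plane traffic."""

    def __init__(self, ip: str):
        self.ip = ip
        self.mac_table = {}  # remote host MAC -> IP of the VTEP behind which it sits

    def receive(self, inner_src_mac: str, outer_src_ip: str) -> None:
        # Learning step: the outer source IP of a decapsulated packet reveals
        # which remote VTEP the inner source MAC lives behind.
        self.mac_table[inner_src_mac] = outer_src_ip

    def outer_destination(self, inner_dst_mac: str, bum_group: str) -> str:
        # Known MAC: unicast to the learned VTEP.
        # Unknown MAC: flood to the underlay multicast group of the segment.
        return self.mac_table.get(inner_dst_mac, bum_group)


if __name__ == "__main__":
    vtep = FloodAndLearnVtep("10.0.0.1")
    group = "239.1.1.1"          # hypothetical multicast group for one VNI
    host_b = "00:00:00:00:00:0b"

    print(vtep.outer_destination(host_b, group))  # flooded to the group
    vtep.receive(inner_src_mac=host_b, outer_src_ip="10.0.0.2")
    print(vtep.outer_destination(host_b, group))  # unicast to 10.0.0.2
```

Every unknown destination triggers a flood to all VTEPs in the group, which is the scalability concern described above.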

To overcome the limitations of the flood-and-learn VXLAN as defined in RFC 7348, organizations can use Multiprotocol Border Gateway Protocol (MP-BGP) Ethernet Virtual Private Network (EVPN) as the control plane for VXLAN. IETF defines MP-BGP EVPN as the standards-based control plane for VXLAN overlays. The MP-BGP EVPN control plane provides protocol-based VTEP peer discovery and end-host reachability information distribution that allows more scalable VXLAN overlay network designs suitable for private and public clouds. The MP-BGP EVPN control plane introduces a set of features that reduces or eliminates traffic flooding in the overlay network and enables optimal forwarding for both east-west and north-south traffic.
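
For contrast with flood-and-learn, the following Python sketch models control-plane learning: a VTEP advertises a locally attached host once, and every other VTEP installs the entry before any data traffic flows. The route record loosely mirrors an EVPN Route Type 2 (MAC/IP Advertisement); the class names and the route reflector stand-in are assumptions for illustration and omit real BGP attributes such as route distinguishers and route targets.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple


@dataclass(frozen=True)
class MacIpRoute:
    """Simplified stand-in for an EVPN MAC/IP Advertisement route."""
    mac: str
    ip: str
    vni: int
    next_hop: str  # IP address of the advertising VTEP


@dataclass
class EvpnVtep:
    ip: str
    remote_hosts: Dict[Tuple[int, str], MacIpRoute] = field(default_factory=dict)

    def install(self, route: MacIpRoute) -> None:
        # Ignore our own advertisements; install everything else.
        if route.next_hop != self.ip:
            self.remote_hosts[(route.vni, route.mac)] = route

    def lookup(self, vni: int, mac: str) -> Optional[str]:
        route = self.remote_hosts.get((vni, mac))
        return route.next_hop if route else None


class RouteReflector:
    """Hypothetical helper that relays each route to every VTEP."""

    def __init__(self, vteps: List[EvpnVtep]):
        self.vteps = vteps

    def advertise(self, route: MacIpRoute) -> None:
        for vtep in self.vteps:
            vtep.install(route)


if __name__ == "__main__":
    leaf1, leaf2, leaf3 = (EvpnVtep(f"10.0.0.{i}") for i in (1, 2, 3))
    rr = RouteReflector([leaf1, leaf2, leaf3])
    rr.advertise(MacIpRoute("00:00:00:00:00:0b", "192.168.10.11", 30000, "10.0.0.2"))
    print(leaf1.lookup(30000, "00:00:00:00:00:0b"))
    print(leaf2.lookup(30000, "00:00:00:00:00:0b"))
```

Because reachability arrives through the control plane, `leaf1` and `leaf3` can forward to the host without first flooding an unknown unicast frame.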

The VXLAN EVPN Multi-Site feature interconnects two or more BGP-based EVPN sites or fabrics in a scalable fashion over an IP-only network. The Border Gateway (BG) is the node that interacts with nodes within a site and with nodes that are external to the site. For example, in a leaf-spine data center fabric, the BG can be a leaf, a spine, or a separate device acting as a gateway to interconnect the sites.

VXLAN Multi-Site Use Cases

VXLAN EVPN Multi-Site architecture is a design for VXLAN BGP EVPN-based overlay networks. It interconnects multiple distinct VXLAN BGP EVPN fabrics or overlay domains. It also enables new approaches to fabric scaling, compartmentalization, and Data Center Interconnect (DCI).

Building one large data center fabric per location creates challenges for operation and failure containment. Building smaller fabric compartments improves the individual failure and operation domains. However, the complexity of interconnecting these compartments has traditionally hindered the pervasive rollout of such designs, particularly when you must extend both Layer 2 and Layer 3 between them.

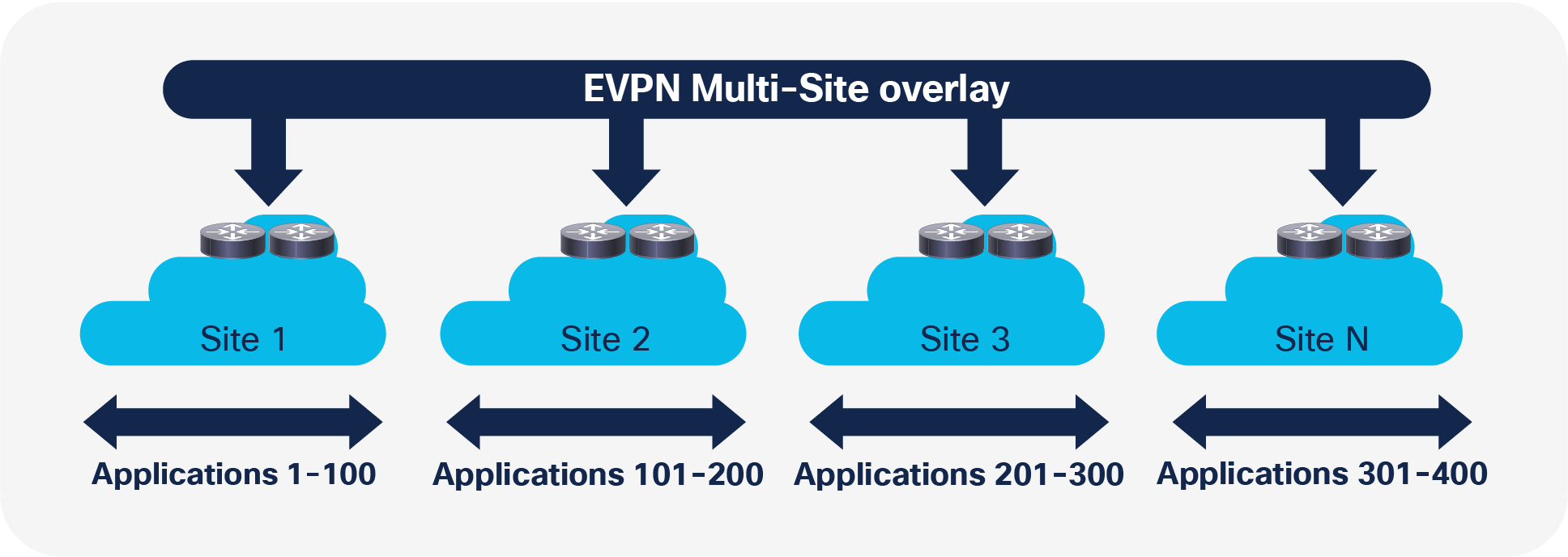

Compartmentalization Example

VXLAN EVPN Multi-Site architecture provides integrated interconnectivity that doesn't require another technology for Layer 2 and Layer 3 extensions. It offers seamless extension between compartments and fabrics, and it lets you control what you extend. In addition to defining which VLANs or Virtual Routing and Forwarding (VRF) instances to extend, you can control broadcast, unknown unicast, and multicast (BUM) traffic within the Layer 2 extensions to limit the ripple effect of a failure in one fabric.
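
The idea of controlling what you extend can be sketched as a simple policy object. This is a conceptual Python model only: the class, its fields, and the percentage-based BUM cap are hypothetical and do not represent NDFC or NX-OS configuration syntax.

```python
from dataclasses import dataclass, field
from typing import Set


@dataclass
class SiteExtensionPolicy:
    """Hypothetical model of what a site extends to other sites."""
    extended_l2_vnis: Set[int] = field(default_factory=set)  # Layer 2 segments
    extended_vrfs: Set[str] = field(default_factory=set)     # Layer 3 tenants
    bum_limit_percent: float = 100.0  # assumed cap on BUM traffic leaving the site

    def extends_l2(self, vni: int) -> bool:
        return vni in self.extended_l2_vnis

    def extends_l3(self, vrf: str) -> bool:
        return vrf in self.extended_vrfs

    def admitted_bum(self, offered_percent: float) -> float:
        # BUM traffic above the cap is dropped, so a broadcast storm in one
        # site reaches other sites only up to the configured limit.
        return min(offered_percent, self.bum_limit_percent)


if __name__ == "__main__":
    policy = SiteExtensionPolicy(
        extended_l2_vnis={30000},
        extended_vrfs={"tenant-a"},
        bum_limit_percent=5.0,
    )
    print(policy.extends_l2(30000), policy.extends_l2(30001))
    print(policy.extends_l3("tenant-b"))
    print(policy.admitted_bum(40.0))
```

Segments and VRFs that are not listed stay local to the site, which is what keeps each compartment an independent failure domain.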

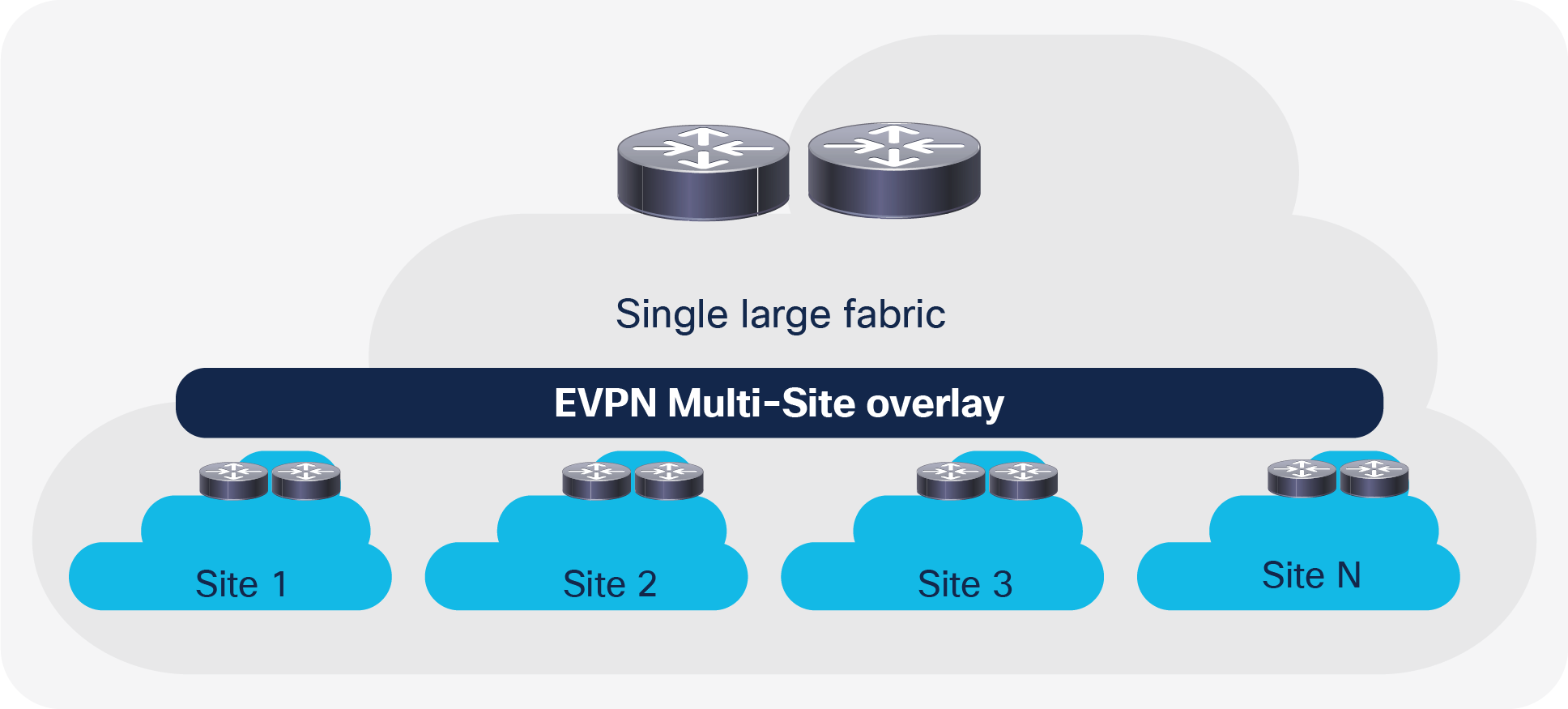

When you build networks using the scale-up model, one device or component typically reaches its scale limit before the network as a whole does. The scale-out approach improves on this for data center fabrics. Nevertheless, a single data center fabric also has scale limits, so scaling out within one large fabric eventually reaches a ceiling as well.

In addition to scaling out a single fabric, the EVPN Multi-Site architecture lets you scale out at the next level of the hierarchy. Just as you add leaf nodes for capacity within a data center fabric, in the EVPN Multi-Site architecture you can add fabrics (sites) to scale the overall environment horizontally. Besides increasing the scale, this approach contains the full-mesh VXLAN adjacencies between the VXLAN tunnel endpoints (VTEPs) within each fabric.
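
A rough count shows why containing the full mesh matters. The Python sketch below compares VTEP-to-VTEP adjacencies in one large fabric with the same number of leaf VTEPs split across sites. It rests on simplifying assumptions that are not from the source: every VTEP in a fabric peers with every other VTEP in that fabric, and the border gateways of each site are counted as one logical VTEP that peers with the gateways of every other site.

```python
def full_mesh_links(nodes: int) -> int:
    """Number of pairwise adjacencies in a full mesh of `nodes` endpoints."""
    return nodes * (nodes - 1) // 2


def single_fabric_adjacencies(total_vteps: int) -> int:
    """All leaf VTEPs in one fabric, fully meshed."""
    return full_mesh_links(total_vteps)


def multi_site_adjacencies(sites: int, leaf_vteps_per_site: int) -> int:
    """Leaf VTEPs mesh only inside their site; gateways mesh between sites."""
    # +1 accounts for the site's border gateway, modeled as one logical VTEP.
    per_site = full_mesh_links(leaf_vteps_per_site + 1)
    between_sites = full_mesh_links(sites)
    return sites * per_site + between_sites


if __name__ == "__main__":
    print(single_fabric_adjacencies(200))   # 19900
    print(multi_site_adjacencies(4, 50))    # 5106
```

Under these assumptions, 200 leaf VTEPs in one fabric form 19,900 adjacencies, while four sites of 50 leaf VTEPs each form 5,106, because the quadratic growth applies only within each site.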

Scale Example

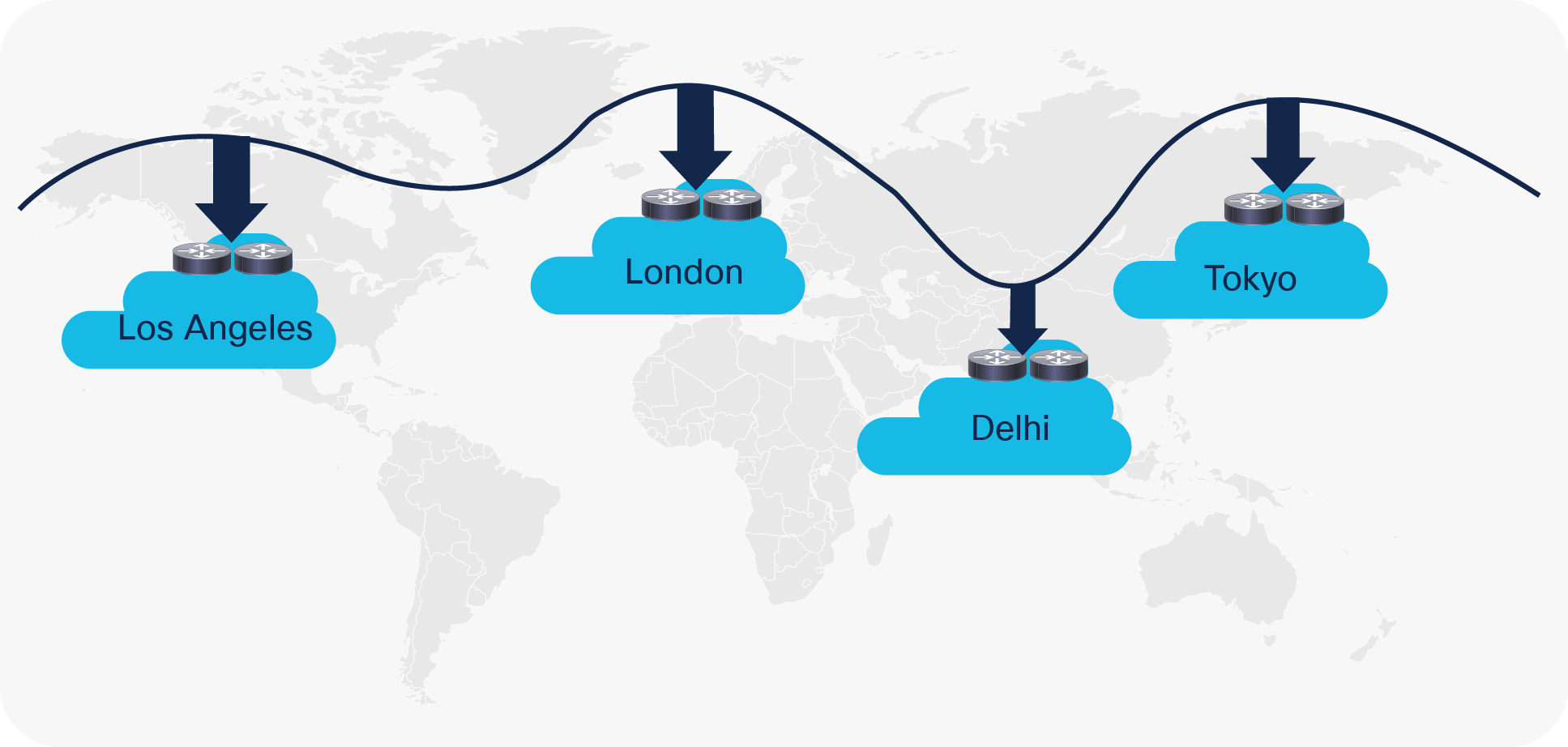

You can also use the EVPN Multi-Site architecture for DCI scenarios. As with compartmentalization and scale-out within a data center, the EVPN Multi-Site architecture was built with DCI in mind. The overall architecture lets you position and interconnect single or multiple sites per data center with single or multiple sites in a remote data center. Seamless and controlled Layer 2 and Layer 3 extension, with VXLAN BGP EVPN used within and between sites, increases the capabilities of VXLAN BGP EVPN itself. New functions for network control, VTEP masking, and BUM traffic enforcement are some of the features that make the EVPN Multi-Site architecture an efficient DCI technology.

DCI Example